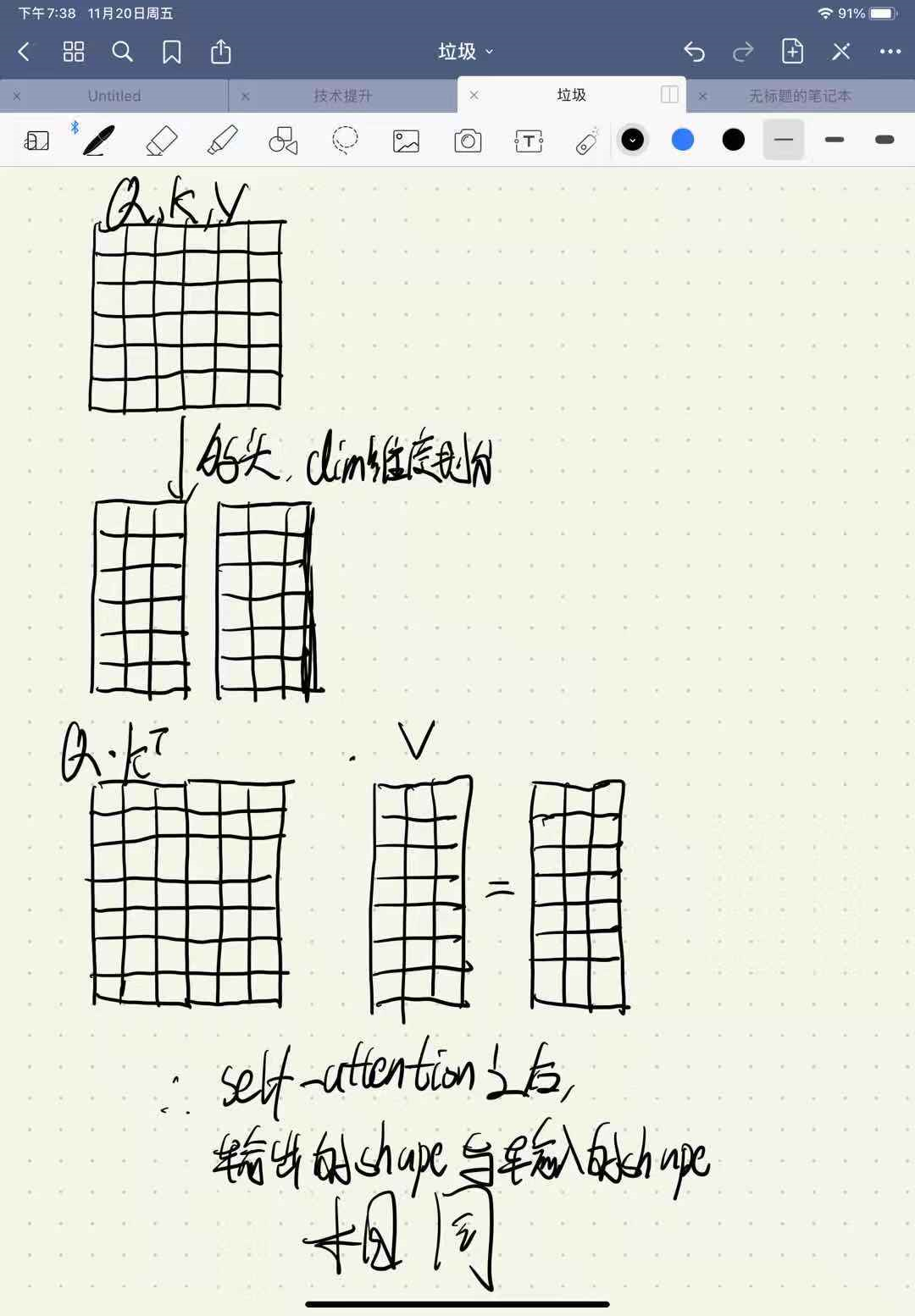

```python
import torch
import torch.nn as nn


class NetNestedSeq(nn.Module):
    def __init__(self):
        super().__init__()
        # A Sequential nested inside a custom module
        self.my_net = nn.Sequential(
            nn.Linear(1000, 100),
            nn.ReLU(),
            nn.Dropout(0.2),
        )
        self.fc = nn.Linear(100, 10)

    def forward(self, x):
        x = self.my_net(x)
        return self.fc(x)


torch.manual_seed(0)
net_nested_seq = NetNestedSeq()
print(net_nested_seq)
# NetNestedSeq(
#   (my_net): Sequential(
#     (0): Linear(in_features=1000, out_features=100, bias=True)
#     (1): ReLU()
#     (2): Dropout(p=0.2, inplace=False)
#   )
#   (fc): Linear(in_features=100, out_features=10, bias=True)
# )
print(net_nested_seq.my_net[0])
# Linear(in_features=1000, out_features=100, bias=True)
```

```
(0): Linear(in_features=10, out_features=4, bias=True)
(1): BatchNorm1d(4, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
(0): Linear(in_features=4, out_features=5, bias=True)
(1): BatchNorm1d(5, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
(0): Linear(in_features=5, out_features=1, bias=True)
(1): BatchNorm1d(1, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
```

We can actually see that we have these repeating blocks. Phew! This saved us from defining these 9 individual layers one by one.

VGG networks were one of the first deep CNNs ever built! Let's try to recreate it. VGG has two types of blocks, defined in this paper.

Figure 1. The two types of VGG blocks: the two-layer ones (blue, orange) and the three-layer ones (purple, green, red). Image taken from this datasciencecentral article.

```
(2): MaxPool2d(kernel_size=(2, 2), stride=2, padding=0, dilation=1, ceil_mode=False)
(4): MaxPool2d(kernel_size=(2, 2), stride=2, padding=0, dilation=1, ceil_mode=False)
```

We have now recreated the VGG model! How cool is that?

What we've encountered so far are relatively simple networks: they take in the output of the previous layer and transform it. An improvement on this is residual networks, which pass the output of an earlier layer to a layer a few steps ahead, forming a “skip connection”. This has been shown to help deep neural networks learn better, as gradients can flow more readily through these connections. A few examples of residual networks are ResNets and UNet.

Figure 2. Image taken from the deeplearning.ai Convolutional Neural Networks course.

Residual networks are designed a bit differently to cater to this feature. In these types of networks, we need a reference to the layers that have skip connections (which you will see later on), so this time using functions to create blocks won't cut it anymore.

In the above code, we can see that we have separate attributes for the different layers and that we have customized our forward function a bit. This is because our forward function needs to accommodate skip connections! Since we needed to pass the initial input X on to a future layer, we stored its value in X2.
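As a rough illustration of the skip-connection idea, here is a minimal sketch of a residual block. It is not the author's original code. `ResidualBlock`, the two 3x3 convolutions, and the BatchNorm layers are illustrative assumptions. Each layer is a separate attribute, and `forward` keeps the block's input in `x2` so it can be added back after the second convolution.

```python
import torch
import torch.nn as nn


class ResidualBlock(nn.Module):
    """Hypothetical two-conv residual block; layer choices are illustrative."""

    def __init__(self, channels: int):
        super().__init__()
        # Separate attributes so forward() can wire the skip connection by hand
        self.conv1 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.bn1 = nn.BatchNorm2d(channels)
        self.conv2 = nn.Conv2d(channels, channels, kernel_size=3, padding=1)
        self.bn2 = nn.BatchNorm2d(channels)
        self.relu = nn.ReLU()

    def forward(self, x):
        x2 = x  # store the block's input for the skip connection
        x = self.relu(self.bn1(self.conv1(x)))
        x = self.bn2(self.conv2(x))
        x = x + x2  # skip connection: add the stored input back
        return self.relu(x)


torch.manual_seed(0)
block = ResidualBlock(channels=8)
out = block(torch.randn(2, 8, 16, 16))
print(out.shape)  # torch.Size([2, 8, 16, 16])
```

Because `padding=1` keeps the spatial size and the channel count is unchanged, the input and output shapes match, so the addition needs no extra projection layer.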
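Going back to the VGG blocks from Figure 1, the sketch below shows one way to build them with a block-making function, assuming the standard VGG-16 layout: 3x3 convolutions with padding 1, ReLU, and a 2x2 max-pool at the end of each block. `vgg_block` and `vgg16_features` are hypothetical names, and the fully connected classifier head is left out.

```python
import torch
import torch.nn as nn


def vgg_block(in_channels: int, out_channels: int, num_convs: int) -> nn.Sequential:
    """num_convs 3x3 conv + ReLU pairs, then a 2x2 max-pool that halves H and W."""
    layers = []
    for i in range(num_convs):
        # Only the first conv changes the channel count
        layers.append(nn.Conv2d(in_channels if i == 0 else out_channels, out_channels,
                                kernel_size=3, padding=1))
        layers.append(nn.ReLU())
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)


def vgg16_features() -> nn.Sequential:
    """Convolutional part of VGG-16: two two-layer blocks, then three three-layer blocks."""
    config = [(2, 64), (2, 128), (3, 256), (3, 512), (3, 512)]  # (num_convs, out_channels)
    blocks = []
    in_channels = 3  # RGB input
    for num_convs, out_channels in config:
        blocks.append(vgg_block(in_channels, out_channels, num_convs))
        in_channels = out_channels
    return nn.Sequential(*blocks)


features = vgg16_features()
out = features(torch.randn(1, 3, 224, 224))
print(out.shape)  # torch.Size([1, 512, 7, 7])
```

Five max-pools halve a 224x224 input five times, which leaves the 7x7 feature map that VGG-16's classifier expects.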
